
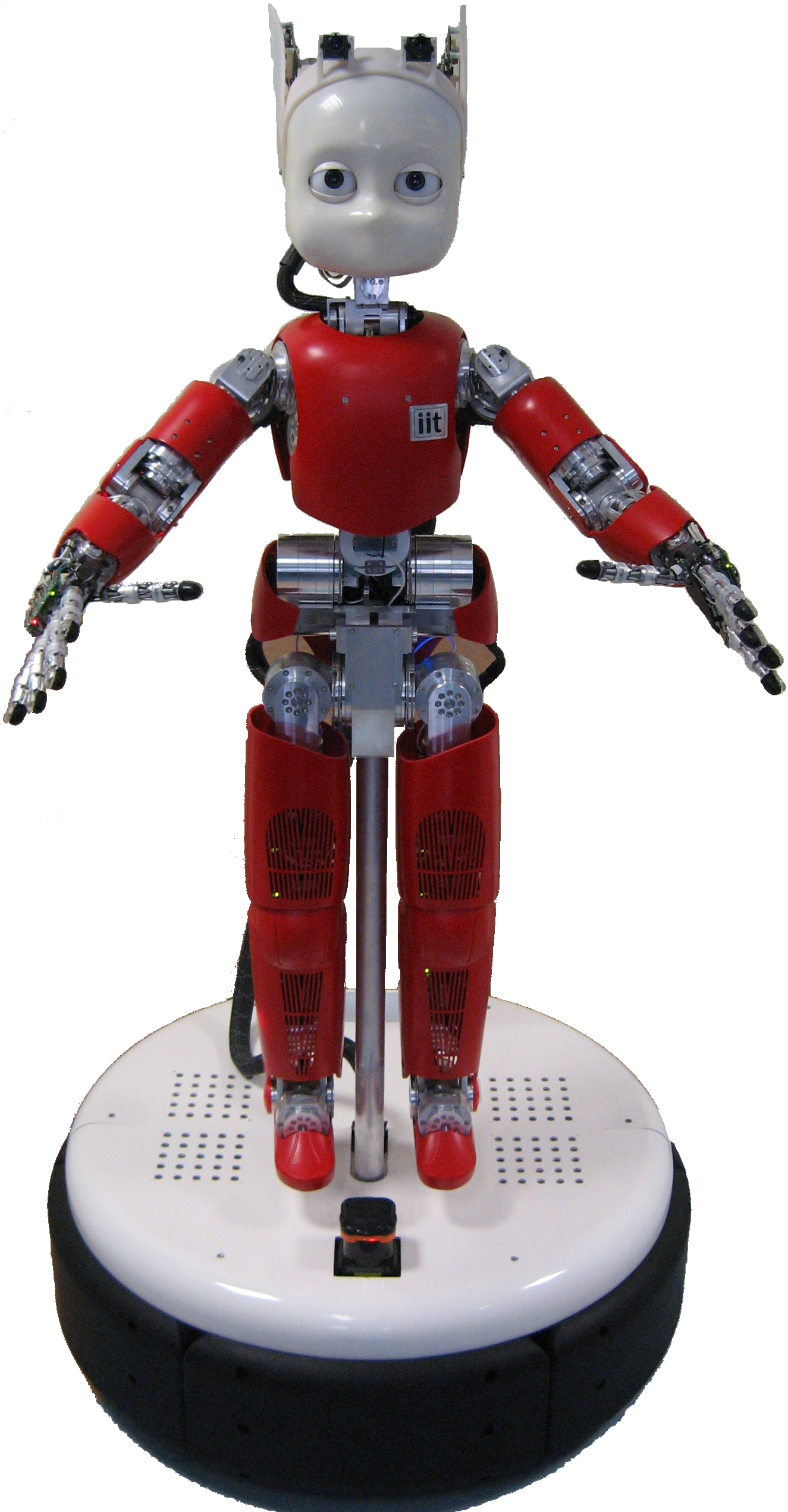

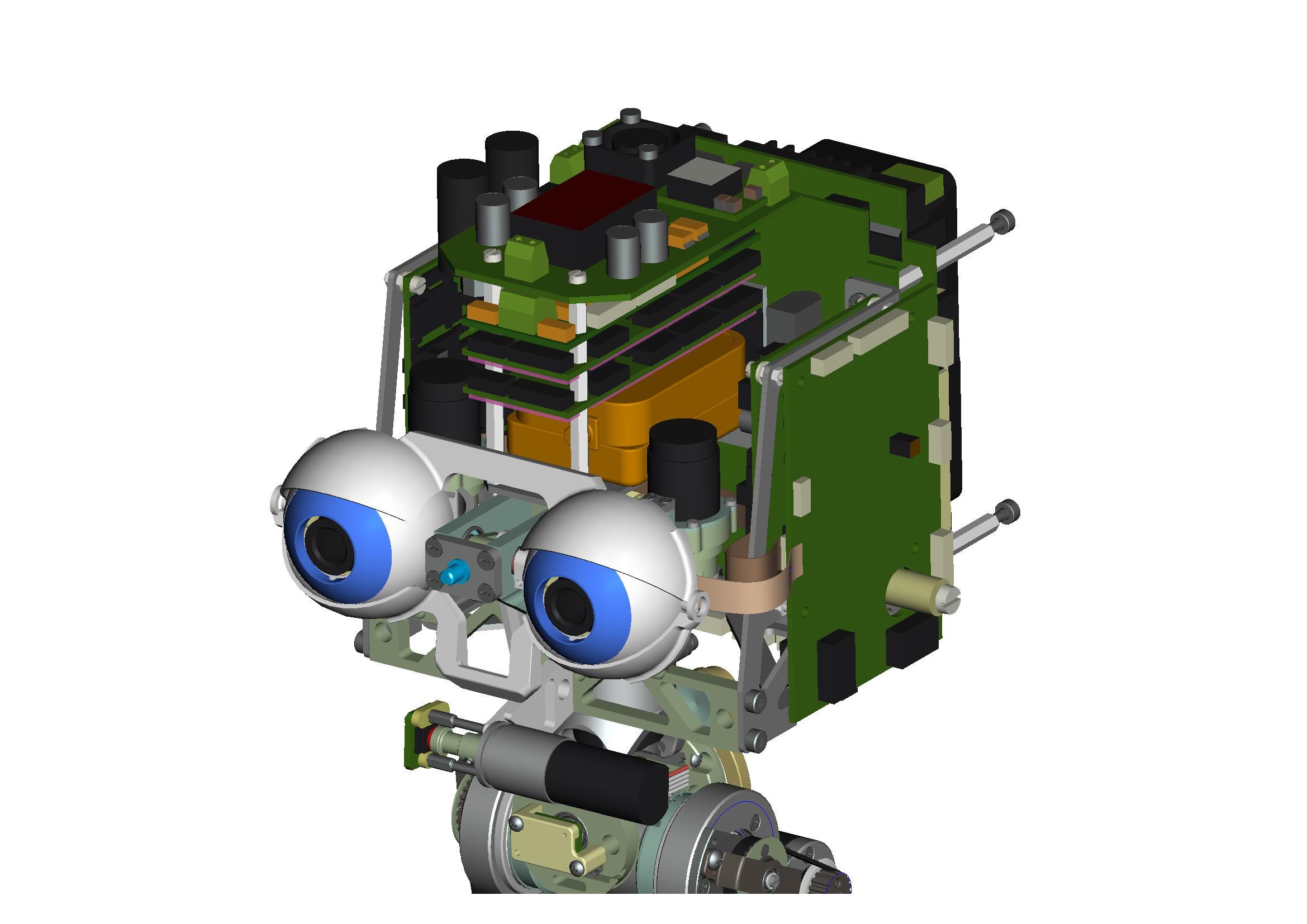

The first prototype of a humanoid robot with an event-driven vision system has been realized and is now available for exploring the potential of bio-inspired vision in complex real-world tasks such as navigation and manipulation.

The full humanoid robot is equipped with the well-known Dynamic Vision Sensor (DVS), dedicated Address-Event Representation (AER) embedded hardware, and a holonomic wheeled vehicle. Reaching this result required developing the diverse modules that compose the system, as well as close collaboration among the disciplines involved in their integration. We first carefully defined the communication standards and infrastructure. Although many AER protocols have been developed in recent years, eMorph formed a discussion group with many other researchers working on AER chips and derived specifications that are both cost effective and usable in a wide range of applications.

While developing the technology needed to integrate the full system, the eMorph consortium also worked on procedures and algorithms for event-driven visual computation and on new asynchronous vision sensors. On the algorithm side, a collaboration with computer vision experts at the Institut des Systèmes Intelligents et de Robotique and the Institut de la Vision (Université Pierre et Marie Curie, Paris) delivered a method for computing with events. Specifically, an event-driven implementation of a classical optical flow method is now running on the robot. On the neuromorphic chip design side, eMorph developed two sensors that approach the trade-off between on-chip processing and the resulting pixel complexity and loss of resolution from opposite extremes: the “Tracker Motion Sensor” and the “Asynchronous Space Variant” sensor.

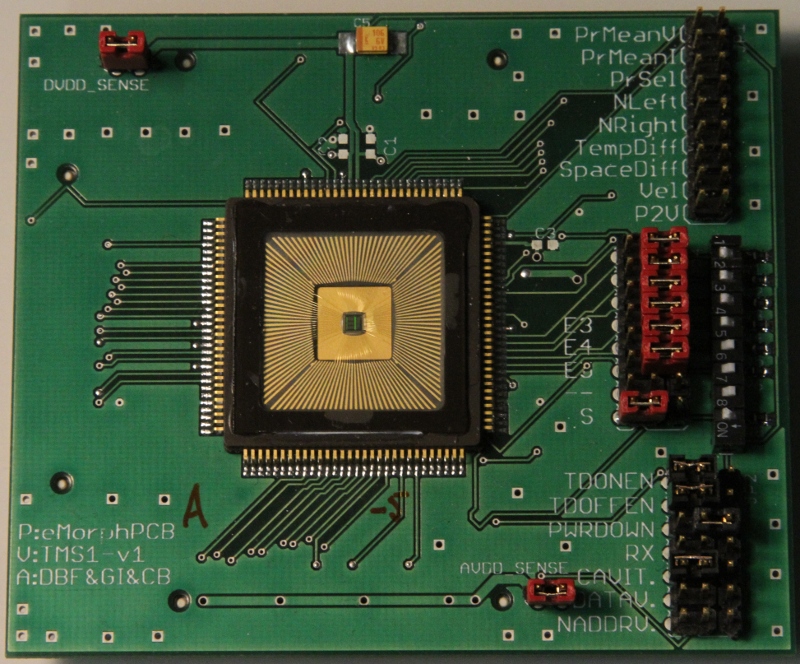

The “Tracker Motion Sensor” (TMS) is a custom analog/digital VLSI chip that implements a 1D intelligent vision sensor able to measure the contrast and the spatial and temporal derivatives of edges moving in its field of view, as well as their absolute velocities. In addition, it comprises circuits that implement a model of “selective attention”, which gives the chip the ability to select and report the spatial location of the most “salient” position in the field of view. The “saliency” of the target is defined as a weighted sum of the spatial derivative, temporal derivative, and velocity intensities. The selection of a winner in the competition stage represents an “event” and is signaled off-chip via a dedicated “data-valid” digital output. The position of the winning location is reported off-chip using both analog position-to-voltage circuits and digital encoders. The contrast information and the spatial and temporal derivative measurements can be read out from each pixel using digital address decoders and buffers. By combining the chip output signals with an intelligent event-driven monitoring procedure (e.g. running on an ordinary micro-controller), it is therefore possible to selectively monitor only those regions of the visual field where salient events take place. The TMS has been simulated, designed and tested; a new generation is currently being designed to address some shortcomings of the current implementation.
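The saliency computation and winner selection described above can be sketched in software. This is only an illustrative model, not the chip's analog circuitry: the names `PixelReading`, `saliency` and `select_winner`, the weights, the threshold, and the use of absolute values for the "intensities" are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class PixelReading:
    """Hypothetical per-pixel measurements of a 1D sensor row."""
    spatial: float   # spatial derivative of the edge at this pixel
    temporal: float  # temporal derivative at this pixel
    velocity: float  # measured edge velocity at this pixel


def saliency(p: PixelReading,
             w_spatial: float = 1.0,
             w_temporal: float = 1.0,
             w_velocity: float = 1.0) -> float:
    """Weighted sum of the three magnitudes (weights are illustrative)."""
    return (w_spatial * abs(p.spatial)
            + w_temporal * abs(p.temporal)
            + w_velocity * abs(p.velocity))


def select_winner(row: Sequence[PixelReading],
                  threshold: float = 0.0,
                  **weights: float) -> Optional[int]:
    """Return the index of the most salient pixel, or None if no pixel
    exceeds the (assumed) threshold. A returned index plays the role of
    the winner "event" that the chip signals via its data-valid output."""
    if not row:
        return None
    scores = [saliency(p, **weights) for p in row]
    winner = max(range(len(scores)), key=scores.__getitem__)
    return winner if scores[winner] > threshold else None
```

A monitoring loop on a micro-controller could then read detailed measurements only around the returned index, which is the selective monitoring idea described in the text.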

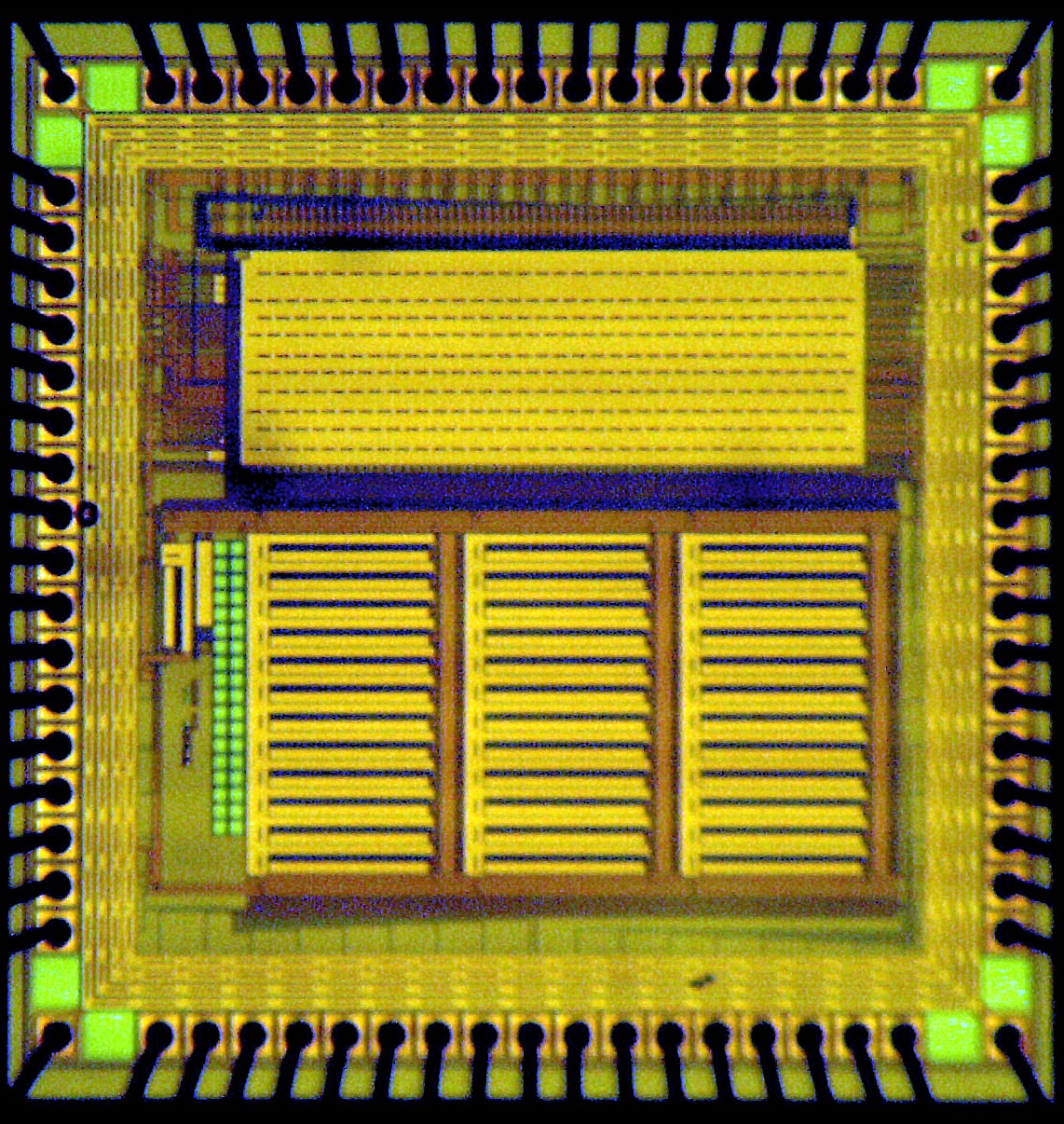

The “Asynchronous Space Variant” (ASV) vision sensor is an AER sensor that responds to the need, highlighted during the development of the event-driven optical flow algorithm, for the concurrent evaluation of the temporal and spatial derivatives of the visual input. Its design builds on the DVS and on its successor, the Asynchronous Time-based Image Sensor (ATIS) (2). The former reports the temporal derivative of the light contrast in the form of asynchronous digital pulses associated with the identity (or address) of the pixel that responded to the light variation. The latter, in addition to the information about the variation of the image contrast, reports the absolute light intensity at the moment the variation is detected, encoded as the inter-spike interval (or pulse width modulation) of the pulses that report the location of the active pixel. The ASV reports the absolute light intensity at four locations within each pixel, allowing the spatial derivative to be computed as the difference between the top/bottom and left/right locations. One of the design specifications of the ASV is the space-variant topology of the photoreceptors, with a high-resolution central region (fovea) and a periphery of progressively lower resolution. This design optimizes the trade-off between the width of the field of view and the number of pixels, while maintaining the resolution in the central part of the sensor. The lower-resolution periphery is used to detect potentially interesting objects, which can then be scrutinized in detail by the central part of the sensor. This approach relaxes the trade-off between sensor resolution and processing capabilities by allowing additional processing to be implemented in the lower-resolution peripheral pixels. One possible example is the introduction of the motion or attentional circuits tested in the TMS sensor; in the realized prototype, the peripheral pixels implement leaky integration as a form of de-noising circuit needed for the evaluation of the optical flow.
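Two of the operations mentioned above, the spatial derivative from four intra-pixel intensity readings and leaky integration for de-noising, can be illustrated with a minimal software sketch. The sensor performs these in circuitry; the names `QuadIntensity`, `spatial_derivative` and `LeakyIntegrator`, the sign convention, and the exponential decay with time constant `tau` are assumptions chosen for the example.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class QuadIntensity:
    """Hypothetical absolute intensities at the four locations in a pixel."""
    top: float
    bottom: float
    left: float
    right: float


def spatial_derivative(q: QuadIntensity) -> Tuple[float, float]:
    """Return (dx, dy) as left/right and top/bottom differences.
    The sign convention (right - left, top - bottom) is an assumption."""
    return (q.right - q.left, q.top - q.bottom)


class LeakyIntegrator:
    """Discrete-event model of a leaky integrator: the state decays
    exponentially between events and each event adds its input."""

    def __init__(self, tau: float) -> None:
        if tau <= 0:
            raise ValueError("tau must be positive")
        self.tau = tau
        self.value = 0.0
        self.last_t: Optional[float] = None

    def update(self, t: float, x: float = 1.0) -> float:
        """Decay the state from the previous event time to t, then add x."""
        if self.last_t is not None:
            self.value *= math.exp(-(t - self.last_t) / self.tau)
        self.value += x
        self.last_t = t
        return self.value
```

In this model, isolated noise events decay away before the state grows, whereas events that arrive close together accumulate, which is the de-noising effect that leaky integration is meant to provide.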

Taken together, the results of the first two years of the project provide a solid basis for the successful development of a robust and sophisticated asynchronous visual system that exploits and demonstrates the advantages of the neuromorphic approach in robotics.

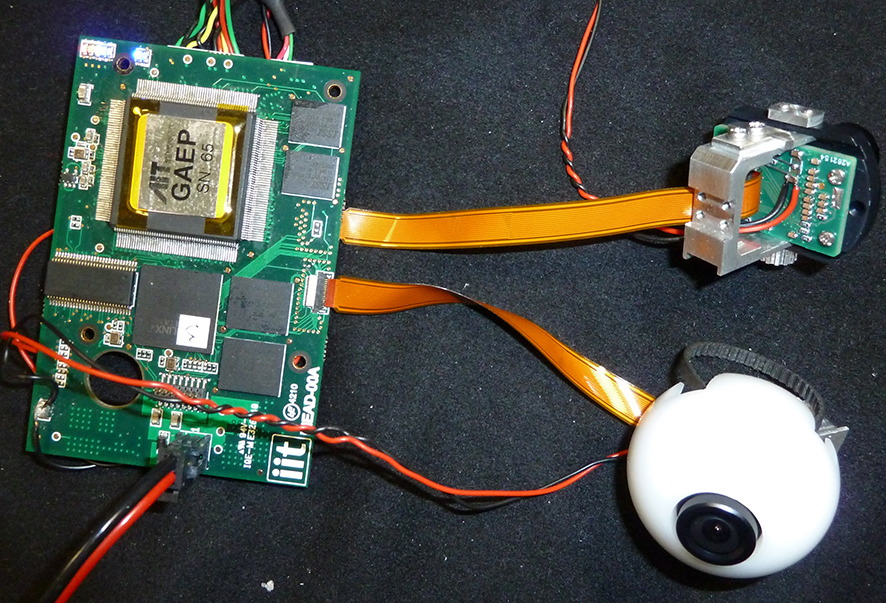

This interface is defined for static point-to-point communication between different boards. In addition, it can support dedicated units that route address-event data to and from different point-to-point communication lines. Thanks to this work, the eMorph project delivered a custom AER board that reads data from two AER chips, pre-processes them, and finally routes them either to standard processors over a USB connection or to post-processing AER chips over a serial line. The board integrates two dedicated AER hardware components: an FPGA for merging and routing AER data, and the General Address Event Processor (GAEP), a SPARC-compatible processor based on the LEON3 core with a custom data interface for asynchronous sensor data. The GAEP's functional blocks include data transfer and protocol handshake, data rate measurement, data filtering, time-stamp assignment, and input data buffer management. The GAEP has been tested, and a full library of functions is now available for programming event-driven algorithms that run on the embedded processor. Finally, dedicated software modules publish the sensory data on the robot’s YARP middleware, enabling any other module of the robotic platform to access the data and perform calculations.
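As an illustration of the data path described above, the following sketch models three of the steps in software: time-stamp assignment, merging of two sensor streams, and data rate measurement. It does not reproduce the board's firmware or the GAEP library; the `AddressEvent` layout and the helper names `stamp`, `merge_streams` and `event_rate_hz` are hypothetical, and each input stream is assumed to be already ordered in time.

```python
import heapq
from dataclasses import dataclass
from typing import Iterable, Iterator, List, Tuple


@dataclass(frozen=True, order=True)
class AddressEvent:
    """Hypothetical event record; ordering compares timestamp first."""
    timestamp_us: int  # time stamp assigned on arrival, in microseconds
    source: int        # which of the two sensors produced the event
    address: int       # address of the pixel that fired


def stamp(raw: Iterable[Tuple[int, int]], source: int) -> Iterator[AddressEvent]:
    """Attach a source id to raw (arrival_time_us, address) pairs."""
    for t_us, address in raw:
        yield AddressEvent(t_us, source, address)


def merge_streams(a: Iterable[AddressEvent],
                  b: Iterable[AddressEvent]) -> Iterator[AddressEvent]:
    """Merge two time-ordered streams into one time-ordered stream."""
    return heapq.merge(a, b)


def event_rate_hz(events: List[AddressEvent]) -> float:
    """Mean event rate over the span of a time-ordered list of events."""
    if len(events) < 2:
        return 0.0
    span_us = events[-1].timestamp_us - events[0].timestamp_us
    if span_us == 0:
        return 0.0
    return (len(events) - 1) * 1e6 / span_us
```

A merged, time-stamped stream of this kind is what a downstream consumer, such as a module reading from the middleware, would typically iterate over.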
